TL;DR:

DeepSeek R1 and Gemini-2.0 API are two strong AI contenders on Google Cloud Platform (GCP)—but how do they compare? In this third post of our series, we analyze independent benchmarks for speed, cost, and accuracy to help you determine the best fit for your business needs. Which model should you deploy?

With AI adoption accelerating, businesses face a critical decision: which LLM should power their applications?

For organizations using Google Cloud Platform (GCP), two strong contenders are:

- DeepSeek R1: An open-source LLM that offers flexibility and customization.

- Gemini-2.0 API: A proprietary, fully managed GCP model optimized for enterprise AI workloads.

Both models excel in different areas, but how do they compare in speed, cost, and accuracy? In this third post of our series, we review independent AI benchmarks and compile the latest data to help you choose the best fit for your business needs.

Let’s get into it.

Methodology: How We Tested

To ensure a fair, research-backed comparison, we analyzed independent benchmarks from various AI testing sources. Our evaluation covers the following use cases and metrics.

Key Use Cases Compared

- Document Summarization: Assessing how well each model condenses long-form text while preserving key information.

- Code Generation: Evaluating the efficiency and accuracy of AI-generated Python scripts.

- Domain-Specific Question Answering: Measuring how well each model understands and responds to complex, industry-specific queries.

Core Performance Metrics

- Speed: Median response times from published AI latency benchmarks.

- Cost Efficiency: Cost comparisons based on infrastructure costs (Compute Engine), per-hour pricing (Vertex AI), and per-token fees (Gemini API).

- Accuracy: AI model evaluations, scored by human reviewers, focusing on reasoning, coding, and domain-specific knowledge.

This analysis is based on data from February 2025, with sources cited for full transparency.
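To make the speed metric concrete, here is a minimal sketch of how a median response time could be collected. It is not the harness behind the published benchmarks: `measure_latencies` and `fake_model` are hypothetical names, and `fake_model` is a stub standing in for whichever client call (local runtime, Vertex AI endpoint, or Gemini API) you want to time.

```python
import statistics
import time
from typing import Callable, List


def measure_latencies(call_model: Callable[[str], str], prompt: str, runs: int = 5) -> List[float]:
    """Time `runs` sequential calls and return each wall-clock duration in seconds."""
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        call_model(prompt)  # the response is discarded; only timing matters here
        durations.append(time.perf_counter() - start)
    return durations


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; sleeps briefly to simulate work."""
    time.sleep(0.01)
    return "stub answer to: " + prompt


if __name__ == "__main__":
    samples = measure_latencies(
        fake_model, "Can you explain how neural networks work in simple terms?"
    )
    print(f"median latency: {statistics.median(samples):.3f}s over {len(samples)} runs")
```

The median is used rather than the mean so that a single slow call, such as a cold start, does not dominate the result.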

Benchmark Results: Speed, Cost, and Accuracy

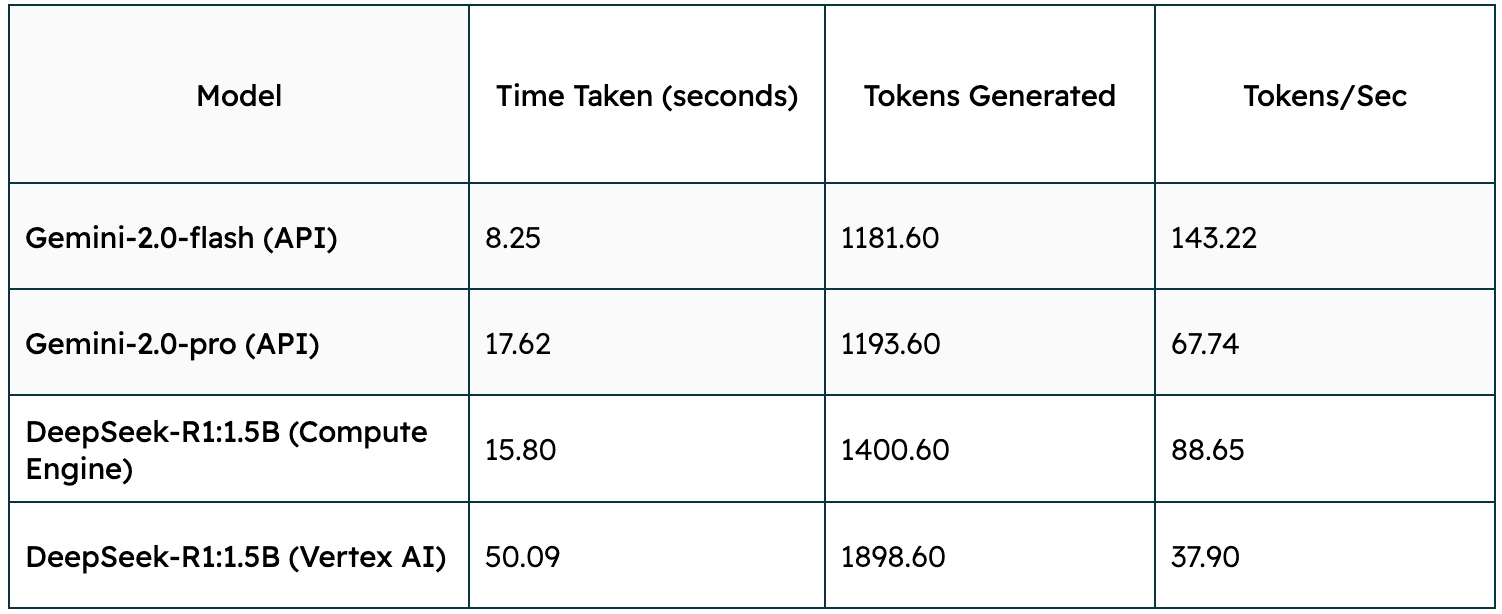

1. Speed Comparison

We evaluated the speed (latency) of both models across various infrastructures.

Settings:

- Each candidate was prompted with, “Can you explain how neural networks work in simple terms?”

- Speed/latency was tested locally on an Apple M2 with 16 GB of unified memory.

- Note that the DeepSeek output includes its “thinking” part.
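Because the DeepSeek output includes its reasoning, it can help to separate that part from the final answer when comparing output length or quality. The sketch below assumes the reasoning is wrapped in `<think>...</think>` tags, which is how R1 output commonly appears when run locally; `split_thinking` is a hypothetical helper, not part of any SDK.

```python
import re
from typing import Tuple

# Assumption: the reasoning is delimited by <think>...</think> tags.
THINK_CONTENT = re.compile(r"<think>(.*?)</think>", re.DOTALL)
THINK_BLOCK = re.compile(r"<think>.*?</think>", re.DOTALL)


def split_thinking(raw: str) -> Tuple[str, str]:
    """Return (thinking, answer) from a raw model response."""
    thinking = "\n".join(part.strip() for part in THINK_CONTENT.findall(raw))
    answer = THINK_BLOCK.sub("", raw).strip()
    return thinking, answer


if __name__ == "__main__":
    raw = "<think>Plan the answer.</think>\nNeural networks are layered functions."
    thinking, answer = split_thinking(raw)
    print("thinking:", thinking)
    print("answer:", answer)
```

If a response has no such tags, the helper returns an empty thinking string and the untouched answer, so it can be applied to the Gemini output as well.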

Key Takeaway: If speed is the priority, Gemini-2.0 Flash (API) is the clear winner. In contrast, DeepSeek R1 (Vertex AI) struggles with latency, making it less suitable for real-time applications unless managed services are a key priority. Gemini-2.0 Pro (API) provides a balanced alternative, trading off some speed for potentially higher output quality.

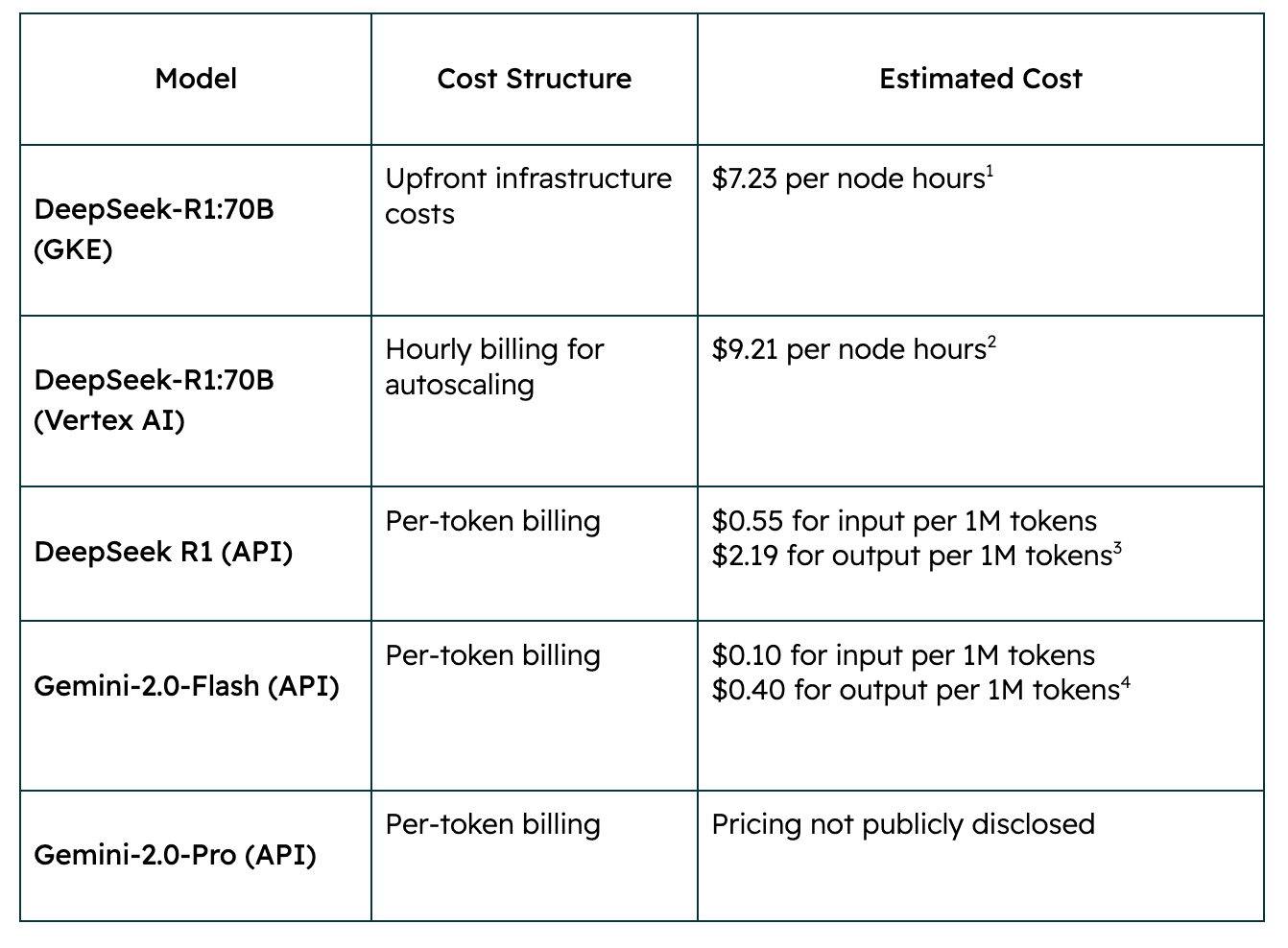

2. Cost Efficiency

Analysis of the cost structure of each model based on infrastructure and usage:

Key Takeaway: Deploying on Vertex AI appears to be the costliest option, while using the API looks like the least costly. However, comparing prices across infrastructures is not enough on its own to choose a model and deployment method. Deploying on GKE gives users the most flexibility in networking, infrastructure, and other customizations. Deploying on Vertex AI is easier to use because it takes infrastructure management off users' hands while still allowing some tailoring. Lastly, if the main goal is to quickly test or use the model within a development plan, an API is the way to go.
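One way to reason about per-hour versus per-token pricing is to compute the monthly token volume at which the two cost the same. The sketch below shows only the arithmetic. The function names are hypothetical, the prices in the example are placeholders rather than real GCP rates, and it assumes a single blended per-token price instead of separate input and output rates.

```python
def hourly_cost(price_per_hour: float, hours: float) -> float:
    """Cost of a dedicated deployment billed per hour."""
    return price_per_hour * hours


def token_cost(price_per_million_tokens: float, tokens: int) -> float:
    """Cost of API usage billed per token (blended input/output rate assumed)."""
    return price_per_million_tokens * tokens / 1_000_000


def break_even_tokens(price_per_hour: float, hours: float, price_per_million_tokens: float) -> float:
    """Token volume at which per-token billing equals the hourly deployment cost."""
    return hourly_cost(price_per_hour, hours) / price_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # Placeholder prices for illustration only; substitute current rates.
    hours_per_month = 720
    tokens = break_even_tokens(
        price_per_hour=10.0, hours=hours_per_month, price_per_million_tokens=1.0
    )
    print(f"break-even volume: {tokens:,.0f} tokens per month")
```

Below the break-even volume the per-token API is cheaper; above it, an always-on deployment starts to pay off, before accounting for engineering and operations effort.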

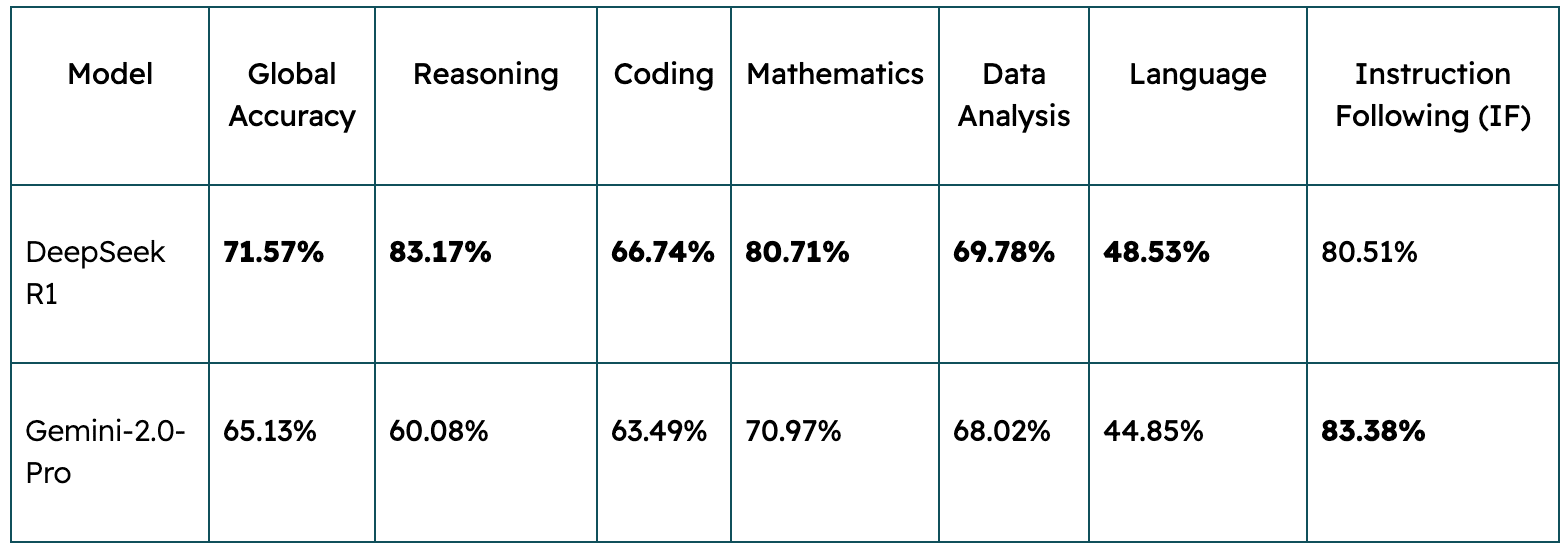

3. Accuracy/Performance Assessment

Recent benchmark tests have evaluated the performance of DeepSeek R1 and Gemini 2.0 Pro Experimental across various tasks. The results are as follows:

Source: LiveBench

Key Takeaway: DeepSeek R1 scores higher in reasoning, mathematics, and data analysis, making it the better choice for research and logic-driven tasks. However, Gemini-2.0 Pro performs better in instruction following (IF), making it a strong choice for structured enterprise applications.

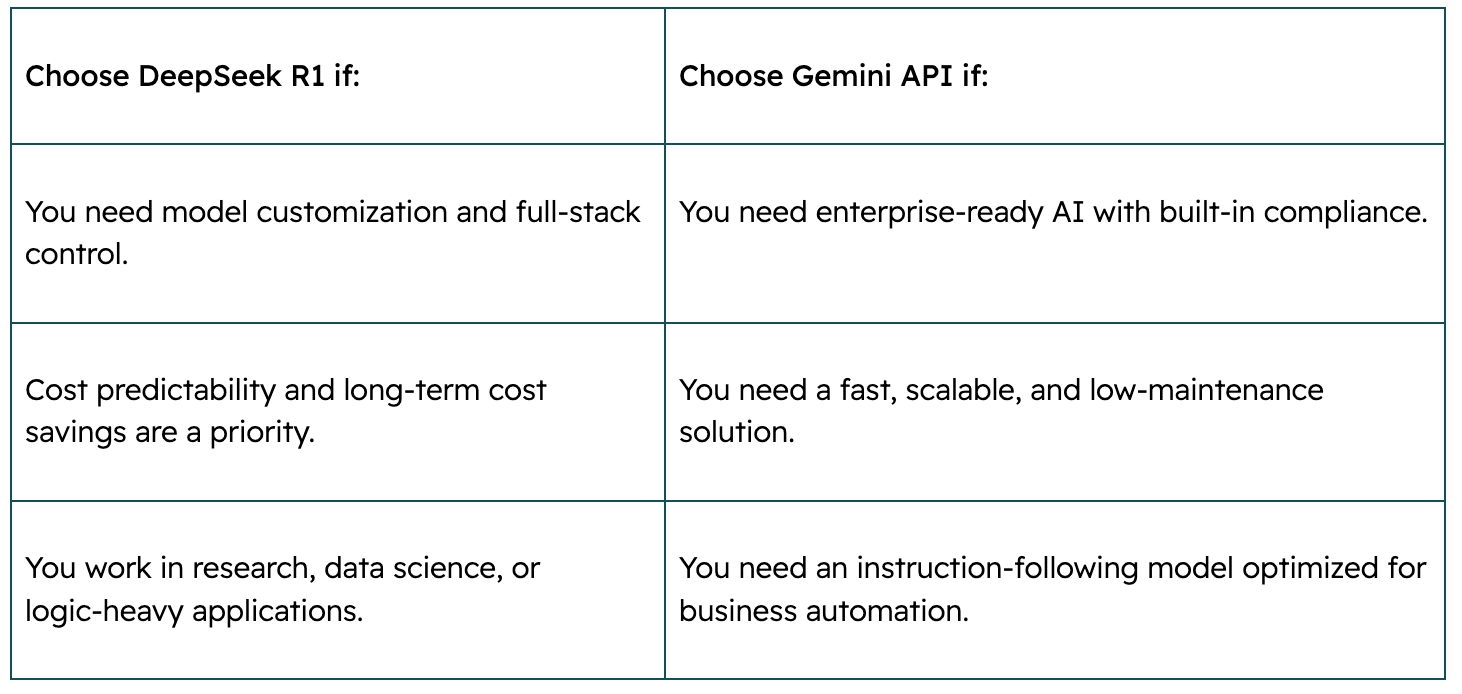

When to Choose Each Model

Choosing between DeepSeek R1 and Gemini API depends on your business needs, budget, and technical resources. Here’s a quick breakdown to help guide your decision:

Both models have unique strengths, and businesses can even combine them, using Gemini API for fast prototyping and DeepSeek R1 for custom AI pipelines.

GCP’s Sweet Spot: Flexibility and Choice

What makes Google Cloud Platform (GCP) a top AI deployment choice is its flexibility. Whether you choose DeepSeek R1 for open-source control or Gemini API for fully managed simplicity, GCP provides:

✅ Autoscaling on Vertex AI for dynamic workloads.

✅ Secure networking with VPC Service Controls to protect sensitive data.

✅ Hybrid AI solutions, allowing businesses to balance open-source and proprietary models.

Looking Ahead

Balancing your AI model strategy is key to scaling efficiently. By benchmarking models like DeepSeek R1 and Gemini API, you can make informed decisions about deployment, costs, and performance.

Want to explore which AI solution is the right fit for your business? Schedule a consultation (via the form below) with us today, and we’ll handle the next step.

Wirit Khongcharoen

Machine Learning Engineer

Chitipat Trachu

Data Engineer

Schedule a consultation

Embrace the power of secure cloud and AI solutions with Tridorian. Reach out to learn how we can make a difference.